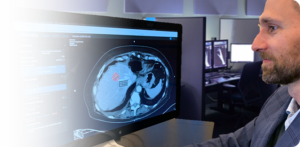

Cancer Reads, Twice as Fast

AI Metrics cuts read times in half by using artificial intelligence to improve the efficiency and accuracy of cancer reads.

AI Metrics Delivers:

2x Faster Reads

99% Reduction in Major Errors

Automated Reports

Reduced Radiologist Burnout

Say Goodbye to Burnout

Radiologist burnout is a serious problem for healthcare organizations. Not only does it impact productivity and retention, but chronic stress in the workplace can lead to mental health issues, too.

In a recent survey of U.S. physicians, 49% of radiologists said they’re experiencing burnout. Among the top reported causes: too many tasks and long hours at work.

AI Metrics helps radiologists solve these burnout-inducing problems with:

Faster Cancer Reads: By reducing the effort required to complete complex cancer reads, radiologists can cut read times in half.

AI Guidance: AI Metrics serves as an extra set of eyes for radiologists, leveraging the power of artificial intelligence to improve accuracy and efficiency.

Simplified Workflows: The current process of completing cancer reads is tedious and inefficient – requiring constant switching between exams, reports, and worklists. Our intuitive, easy-to-use workflows allow radiologists to keep their eyes on the patient at all times, reducing stress and effort.

Automated Reporting: AI Metrics seamlessly and automatically generates patient reports while the radiologist conducts the study. These clear, visualized reports are preferred by 100% of oncologists over standard text-based options.

"This will revolutionize our efficiency and accuracy"

Anthony Paul Trace, MD, PhD

Hampton Roads Radiology Associates

Radiology resources

Stop Worklist Cherry-Picking and Lighten Your Radiologist Workload

When sorting through your to-do list, it’s human nature to save the worst tasks for last. Radiologists are no different. As the queue of pending reads stacks up and the radiologist workload grows, the more routine, simple reads on the worklist are completed first. But this process of worklist cherry-picking can create major workflow problems

How To Complete a Cancer Read in Under Two Minutes

For radiologists, cancer analysis and reporting represents one of the most complex, time-intensive tasks in their day. So when we say that AI Metrics can help radiologists cut cancer read times in half, it’s understandable that we sometimes encounter skepticism. However, there’s no exaggeration behind our claims of dramatically reducing read times for cancer patient

Developing Trust

As Originally Published in Radiology Today

While AI was initially utilized in academic settings for research, the realization that AI solutions can automate and/or standardize specific complex image interpretation tasks in high-value workflows has driven acceptance in clinical settings. For example, within the past few years, AI in CT imaging has gained considerable acceptance. The

Cut Cancer Imaging Read Times in Half

When we founded AI Metrics, our guiding philosophy was simple: To help radiologists move twice as fast through the evaluation of advanced cancer patients. Now, after years of intense development, our AI-enabled radiology software is doing just that – consistently delivering cancer imaging reads in half the time. In this post, we’ll share how AI

What’s next in cancer imaging?

As Originally Published on AuntMinnie.com

Cancer is the second leading killer of Americans, which means that nearly every reader of this article will be impacted by cancer or have a family member who is. This year, nearly 1.9 million people will be diagnosed with cancer in the U.S., a figure that has been growing consistently over the

AI Metrics Research Received “Best of AJR” Award by the American Journal of Roentgenology

AI Metrics, an early-stage radiology software company, announced that the company’s recently published research in the American Journal of Roentgenology (AJR) has been awarded the Best of AJR in the Gastrointestinal Imaging section for 2022. AI Metrics co-founder Dr. Andrew Smith developed an innovative digital biomarker for accurately detecting and staging liver fibrosis and

Simplify Cancer Reads with AI Metrics

Ready to reduce radiologist burnout while delivering better, faster reads? Schedule a software demo today.